Overview

A complete pipeline for producing and selling AI-generated vocal sample packs. A 3B-parameter music model was fine-tuned on curated tracks and paired with a generation and post-production workflow to produce vocals at scale. Each pack contains a full vocal track with separated stems, BPM/key metadata, and word-level lyric timestamps.
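
As a rough illustration of what one pack carries, the contents listed above could be described by a small manifest. This is a minimal sketch, not the project's actual schema: the class names, field names, and file paths are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class WordTimestamp:
    """One sung word with its start/end time in seconds."""
    word: str
    start: float
    end: float


@dataclass
class SamplePack:
    """Hypothetical manifest for a single vocal sample pack."""
    title: str
    vocal_path: str                      # full vocal track
    stem_paths: dict[str, str]           # stem name -> audio file
    bpm: float
    key: str
    words: list[WordTimestamp] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() recurses into the nested WordTimestamp entries.
        return json.dumps(asdict(self), indent=2)


# Example values are made up for illustration only.
pack = SamplePack(
    title="example-pack",
    vocal_path="example-pack/vocal.wav",
    stem_paths={"lead": "example-pack/lead.wav"},
    bpm=120.0,
    key="A minor",
    words=[WordTimestamp("hello", 0.5, 0.9)],
)
manifest_json = pack.to_json()
```

Keeping the timestamps inside the same manifest as the BPM and key means a buyer's tooling can read everything about a pack from one file.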

Reverse-engineered the inference pipeline and built a full training stack from scratch. Frozen embeddings, gradient checkpointing, 8-bit AdamW, and decoder loss skipping brought VRAM usage from 40GB+ down to 22GB, which fits on a single 24GB RTX 3090 and trains in ~20 hours for 30k steps.
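
The training figures above imply a per-step time and a memory saving that are easy to check. The helper names below are illustrative, and because the starting point is quoted as "40GB+", the computed reduction is a lower bound.

```python
def seconds_per_step(total_hours: float, steps: int) -> float:
    """Average wall-clock seconds spent on each optimizer step."""
    return total_hours * 3600 / steps


def vram_reduction(before_gb: float, after_gb: float) -> float:
    """Fraction of VRAM saved relative to the starting footprint."""
    return 1 - after_gb / before_gb


# ~20 hours for 30k steps works out to roughly 2.4 s per step.
step_time = seconds_per_step(20, 30_000)

# 40 GB -> 22 GB is a reduction of about 45% (at least, given "40GB+").
saved = vram_reduction(40, 22)

print(f"{step_time:.1f} s/step, {saved:.0%} less VRAM")
```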

Python API serving the fine-tuned model with supporting post-production tasks: lyrics via LLM tool calling, stem separation (Demucs), BPM/key detection, word-level timestamps (Whisper), and cover art (SD 1.5). Includes a creative seeds system for varied output and reference audio conditioning via MuQ embeddings.
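
For the word-level timestamps, one plausible post-processing step is flattening the transcription result into a plain word list. The sketch below assumes the result dictionary produced by the `openai-whisper` package when `transcribe` is called with `word_timestamps=True` (segments that each hold a `words` list with `word`, `start`, and `end`). Whether the project uses that package or another Whisper implementation is not stated, and `flatten_words` is a hypothetical helper.

```python
from typing import Any


def flatten_words(result: dict[str, Any]) -> list[dict[str, Any]]:
    """Collect every word from a Whisper-style result into one flat list."""
    words = []
    for segment in result.get("segments", []):
        # Segments lack a "words" key when word timestamps were not requested.
        for w in segment.get("words", []):
            words.append({
                "word": w["word"].strip(),  # Whisper words carry leading spaces
                "start": round(float(w["start"]), 3),
                "end": round(float(w["end"]), 3),
            })
    return words


# Hand-written stand-in for a real transcription result (assumed shape).
sample_result = {
    "text": " hello world",
    "segments": [
        {"words": [
            {"word": " hello", "start": 0.5, "end": 0.9},
            {"word": " world", "start": 1.0, "end": 1.4},
        ]},
    ],
}
flat = flatten_words(sample_result)
```

Working on the plain dictionary keeps this step independent of the model call, so it can be tested without loading Whisper.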

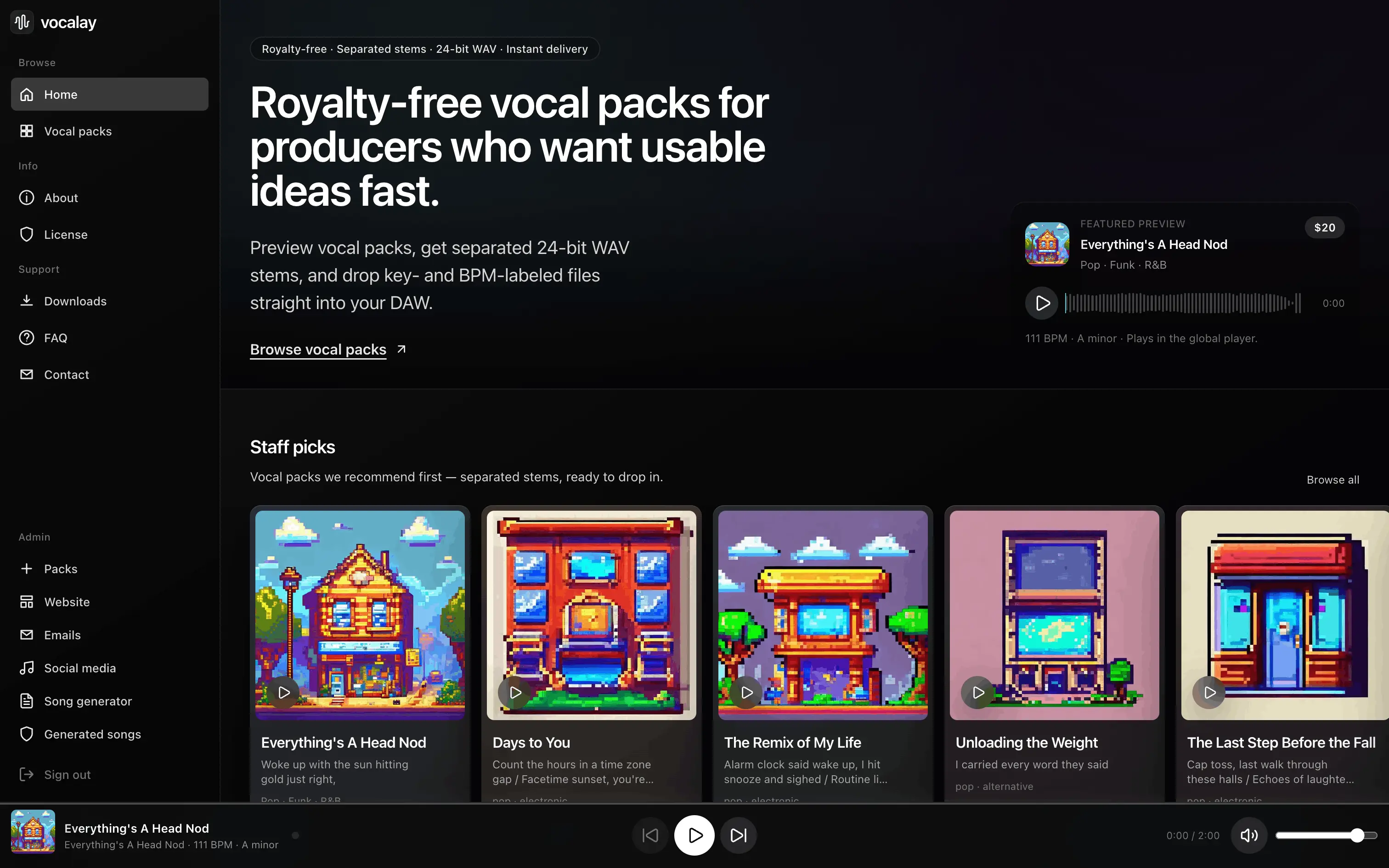

Next.js web app with streaming lyrics generation, reference audio library, batch mode, draft management with inline playback, on-demand stem separation, and a release workflow with auto-generated cover art. Stripe for payments, Neon PostgreSQL for data, Vercel Blob for released audio.